Article originally published on Substack on May 4, 2026.

Why approved AI can still behave outside policy intent

Much of the early concern around enterprise AI focused on Shadow AI: unsanctioned tools operating outside policy, visibility, and control. Implicit in that concern was a reassuring assumption that once organizations moved from ad hoc experimentation to approved enterprise platforms, much of the underlying risk would recede. If the platform was sanctioned and governed, the harder security questions would presumably become more manageable.

That assumption deserves closer examination.

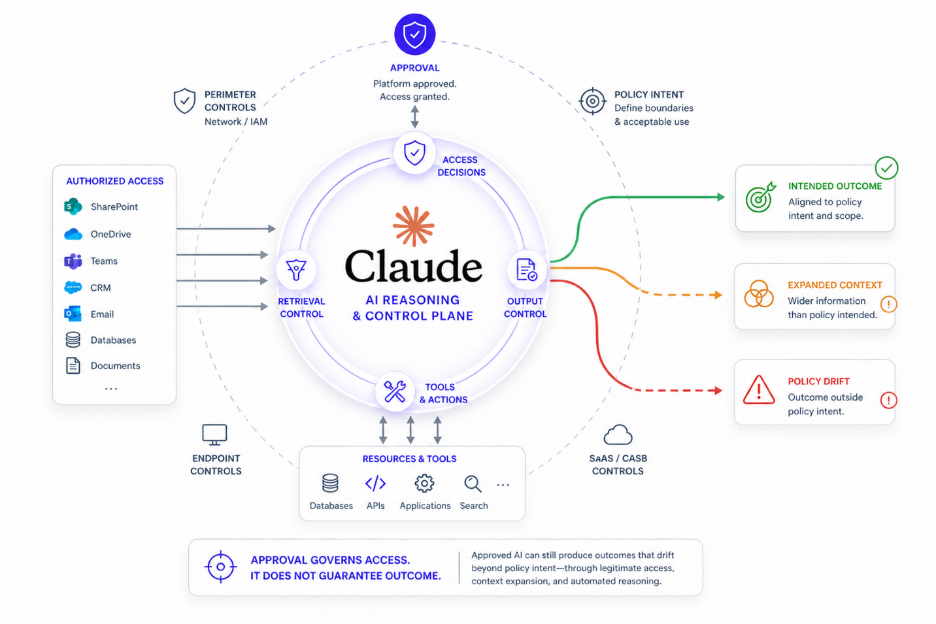

As enterprises adopt systems such as Claude Enterprise, a different governance challenge is becoming visible. In some respects, sanctioned AI can create harder questions precisely because it is trusted, broadly connected, and designed to participate deeply in ordinary workflows. The issue is not that approved AI becomes rogue. It is that approved AI can still produce outcomes that policy did not intend, often through interactions that appear legitimate under traditional control assumptions.

That is close to the heart of what I have called Shady AI.

And connected AI is making it harder to ignore.

When Trusted Workflows Drift Outside Policy Intent

Trust can obscure the need for governance. Once a platform is approved, there is a tendency to assume its behavior is governed by default. Approval begins to stand in for control.

Connected AI complicates that assumption.

A user may invoke Claude to retrieve information from enterprise repositories, analyze customer materials, generate content, or support downstream decisions. Each step may be individually authorized. The workflow may look ordinary, even beneficial. Yet the aggregate outcome may still drift outside what policy intended.

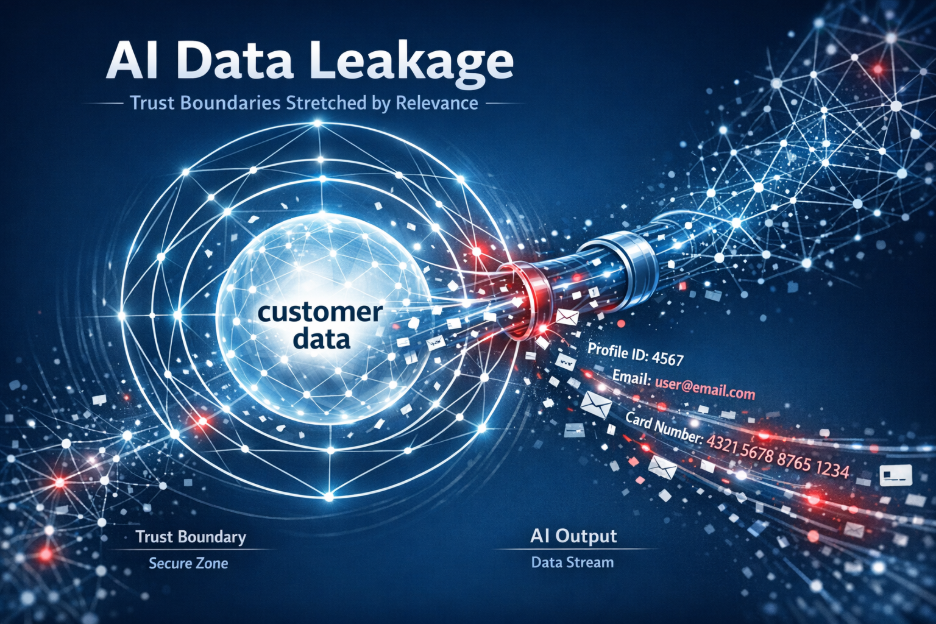

Sensitive context may be drawn into generated responses too broadly. Information tied to one customer relationship may be combined with other context in ways a policy would have constrained if evaluated explicitly. Automated reasoning may act on access that is technically permitted but insufficiently scoped for AI-mediated use.

These are not classic misuse scenarios. Risk can emerge from the interaction of legitimate access, incomplete contextual understanding, and increasingly capable automation.

The challenge is not simply whether the workflow was authorized, but whether its outcome remained aligned to policy intent.
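To make that distinction concrete, here is a minimal Python sketch. Everything in it is hypothetical and chosen only for illustration: the `Document` type, the `USER_GRANTS` table, and the rule that sensitive material from different customers should not be combined in one response. It does not represent how Claude Enterprise or any particular product evaluates policy. It only shows how every step can pass an access check while the aggregate outcome still falls outside the intended boundary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    customer: str    # customer relationship the document belongs to
    sensitive: bool  # whether the content is considered sensitive


# Hypothetical access grants: the documents each user may read.
USER_GRANTS = {
    "alice": {"acme-contract", "globex-pricing", "public-faq"},
}


def step_is_authorized(user: str, doc: Document) -> bool:
    """Traditional control: is this single retrieval permitted?"""
    return doc.doc_id in USER_GRANTS.get(user, set())


def outcome_within_intent(docs: list[Document]) -> bool:
    """Assumed policy intent: sensitive material from different
    customers should not be combined in one generated response."""
    customers = {d.customer for d in docs if d.sensitive}
    return len(customers) <= 1


def evaluate_workflow(user: str, docs: list[Document]) -> dict[str, bool]:
    """Report the per-step check and the outcome-level check separately."""
    return {
        "steps_authorized": all(step_is_authorized(user, d) for d in docs),
        "outcome_within_intent": outcome_within_intent(docs),
    }


# One AI-mediated request that pulls context from three permitted sources.
retrieved = [
    Document("acme-contract", "acme", True),
    Document("globex-pricing", "globex", True),
    Document("public-faq", "none", False),
]
result = evaluate_workflow("alice", retrieved)
print(result)
```

In this sketch every retrieval is individually permitted, yet the combined context mixes sensitive material from two customer relationships. The per-step check and the outcome-level check answer different questions, which is why they are reported separately.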

That is a different governance problem.

Why Approval Is Not the Same as Governance

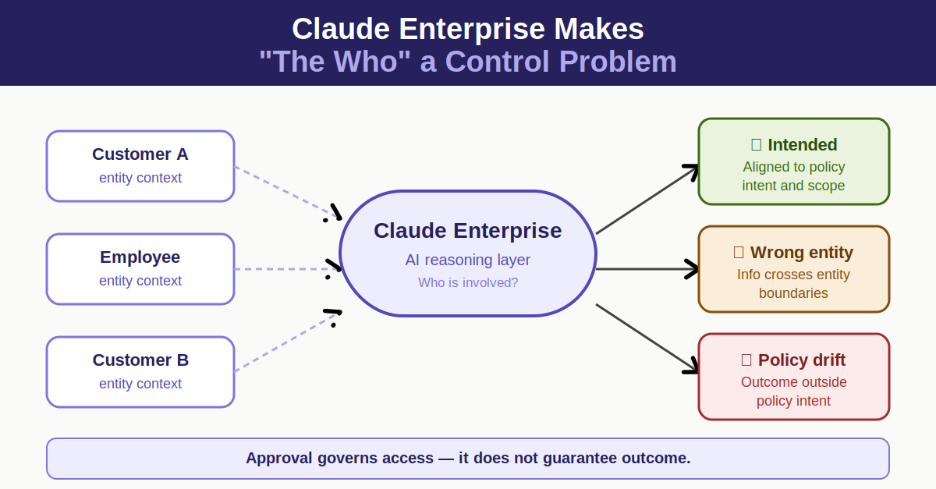

Part of what Claude Enterprise helps expose is a distinction many organizations have not had to confront directly.

Approval and governance are not the same thing.

Security models have often treated them as though they largely travel together. If a platform is trusted and sanctioned, governed outcomes are presumed to follow.

Connected AI puts pressure on that assumption.

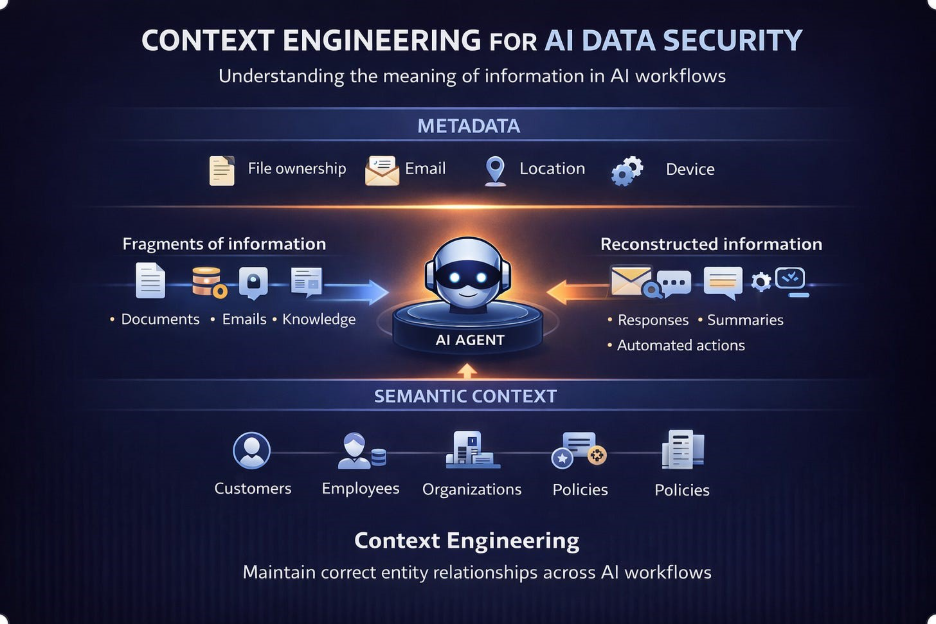

The relevant question is no longer only whether the platform itself is authorized. It is whether the use of enterprise data through that platform remains aligned to policy intent across dynamic reasoning workflows.

One concerns trusting the system.

The other concerns governing how the system uses data.

That distinction matters because AI-mediated workflows can create outcomes outside intended policy boundaries without any obviously unauthorized act occurring.

One implication is difficult to avoid: authorized AI can still produce unauthorized outcomes.

Not because the platform is untrustworthy, but because trust in the platform does not by itself govern how data is used once reasoning and automation operate at scale.

That may prove to be one of the more consequential lessons of this phase of AI adoption.

Why This Is a Shady AI Problem

This is also where the distinction between Shadow AI and Shady AI becomes more consequential.

Shadow AI has often been treated as a visibility problem: unsanctioned tools beyond governance.

Shady AI points to something different: approved systems behaving in ways that quietly drift from business intent, compliance expectations, or customer trust, not through overt policy violation, but through insufficiently governed use.

Connected AI broadens that concern because some consequential risks may emerge not at the edges of sanctioned use, but inside trusted workflows themselves.

That is not a Shadow AI problem in the traditional sense.

It is a governance problem operating within approved systems.

And solving one does not necessarily solve the other.

An organization may materially reduce unsanctioned AI use and still face difficult questions about whether approved AI workflows continue to operate within intended policy boundaries.

Claude does not create that issue.

But it helps make it visible.

When Governance Must Move Beyond Approval

In the first article in this series, I argued that Claude Enterprise is pressure testing where data security controls operate. In the second, I argued it is pressure testing what those controls need to understand.

There may be a third implication.

Claude Enterprise is also pressure testing the assumption that trusted AI is necessarily well-governed AI.

That assumption may not hold as cleanly as many expect.

And that is why this is not only a story about AI risk. It is a story about governance maturity.

As connected AI becomes embedded in ordinary enterprise workflows, the harder challenge may not be identifying unauthorized AI. It may be governing authorized AI well enough that it does not become Shady AI.

That may prove to be one of the defining data security questions of this next phase of enterprise AI adoption.

And one increasingly difficult to ignore.